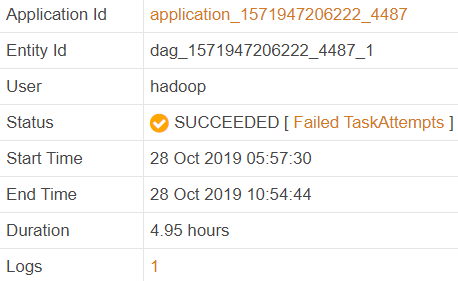

On one of the clusters I noticed an increased rate of shuffle errors. Restarting the job did not help: it kept failing with the same error.

The error was as follows:

```
Error: Error while running task ( failure ) : org.apache.tez.runtime.library.common.shuffle.orderedgrouped.Shuffle$ShuffleError: error in shuffle in Fetcher
	at org.apache.tez.runtime.library.common.shuffle.orderedgrouped.Shuffle$RunShuffleCallable.callInternal(Shuffle.java:301)
Caused by: java.io.IOException: Shuffle failed with too many fetch failures and insufficient progress!failureCounts=1, pendingInputs=1, fetcherHealthy=false, reducerProgressedEnough=true, reducerStalled=true
```
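The `Caused by` line carries the useful diagnostics as `key=value` pairs (`failureCounts`, `pendingInputs`, `fetcherHealthy`, `reducerProgressedEnough`, `reducerStalled`). When the same failure shows up across many task logs, extracting these fields makes the failures easier to compare. Below is a minimal sketch, assuming the message keeps the exact format shown above. `parse_shuffle_diagnostics` is a hypothetical helper, not part of Tez.

```python
import re

# Hypothetical helper (not part of Tez): matches key=value pairs such as
# "pendingInputs=1" or "fetcherHealthy=false" in the IOException message.
_DIAG = re.compile(r"(\w+)=(\w+)")


def parse_shuffle_diagnostics(message: str) -> dict:
    """Return the diagnostic fields, converting 'true'/'false' to bool and digits to int."""
    fields = {}
    for key, raw in _DIAG.findall(message):
        if raw in ("true", "false"):
            fields[key] = raw == "true"
        elif raw.isdigit():
            fields[key] = int(raw)
        else:
            fields[key] = raw  # keep anything unexpected as a string
    return fields


line = ("Shuffle failed with too many fetch failures and insufficient progress!"
        "failureCounts=1, pendingInputs=1, fetcherHealthy=false, "
        "reducerProgressedEnough=true, reducerStalled=true")
print(parse_shuffle_diagnostics(line))
# {'failureCounts': 1, 'pendingInputs': 1, 'fetcherHealthy': False, 'reducerProgressedEnough': True, 'reducerStalled': True}
```

For this error, the parsed fields show that only one input was still pending, yet the fetcher was reported unhealthy and the reducer was reported stalled.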